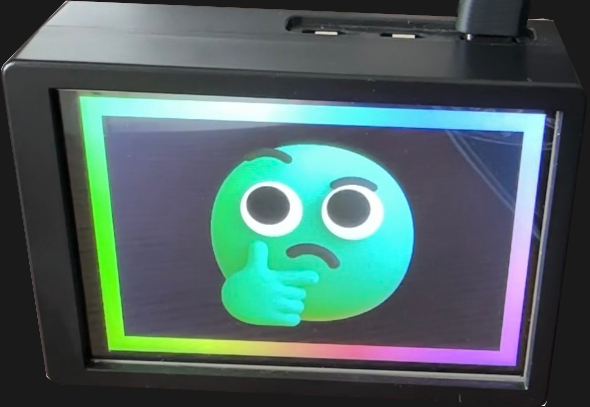

Here’s a link to a short older piece I wrote up for Hackaday that I’d like to highlight: the road to lucid dreaming might be paved with VR.

The short version is that VR can induce mild dissociative experiences in users. As a result, users tend to perform “reality checks”, which can be thought of as a sort of “pinching oneself to check if one is dreaming”.

The idea is that it may be possible to use carefully crafted VR to train users to perform these “reality checks” as second nature. That skill could help someone realize they are in a dream state while still inside it. Another way VR could help achieve lucid dreaming is by priming the user into the right mental state before they fall asleep. Sure, that’s a lot of ifs and maybes, but it certainly seems like the right pieces are there.

I find the concept of using VR to help alter one’s mental state fascinating.

Let me share an evocative story to help demonstrate the potential I think this has: it is about a user’s absurd-sounding near-death experience, which occurred in Walkabout Mini Golf, of all places.

There is a minigolf course on high cliffs, and this user (a fellow member of a discussion board, where they shared the story) had just stepped back for some reason or other. Their foot landed on the raised edge of the carpet they were standing on, and this happened to coincide with the visual of a cliff edge right at their feet. The conclusion their brain instantly reached was “there is a cliff here and I have stepped right onto the edge”. For a split second their brain bought into it completely.

It was enough to send their body into full-blown fight-or-flight mode, and this happened completely by accident.

Now, I’m not necessarily advocating for a VR version of Flatliners, but this story demonstrates that there’s potential to use VR in novel ways to nudge our senses and state of mind in constructive (or at least funny) directions, which is pretty interesting.